|

Apple's latest low-cost iPhone launched yesterday, and we picked up the iPhone 17e to see how it compares to the iPhone 16e that came before it, and how it measures up to the iPhone 17 lineup.

|

|

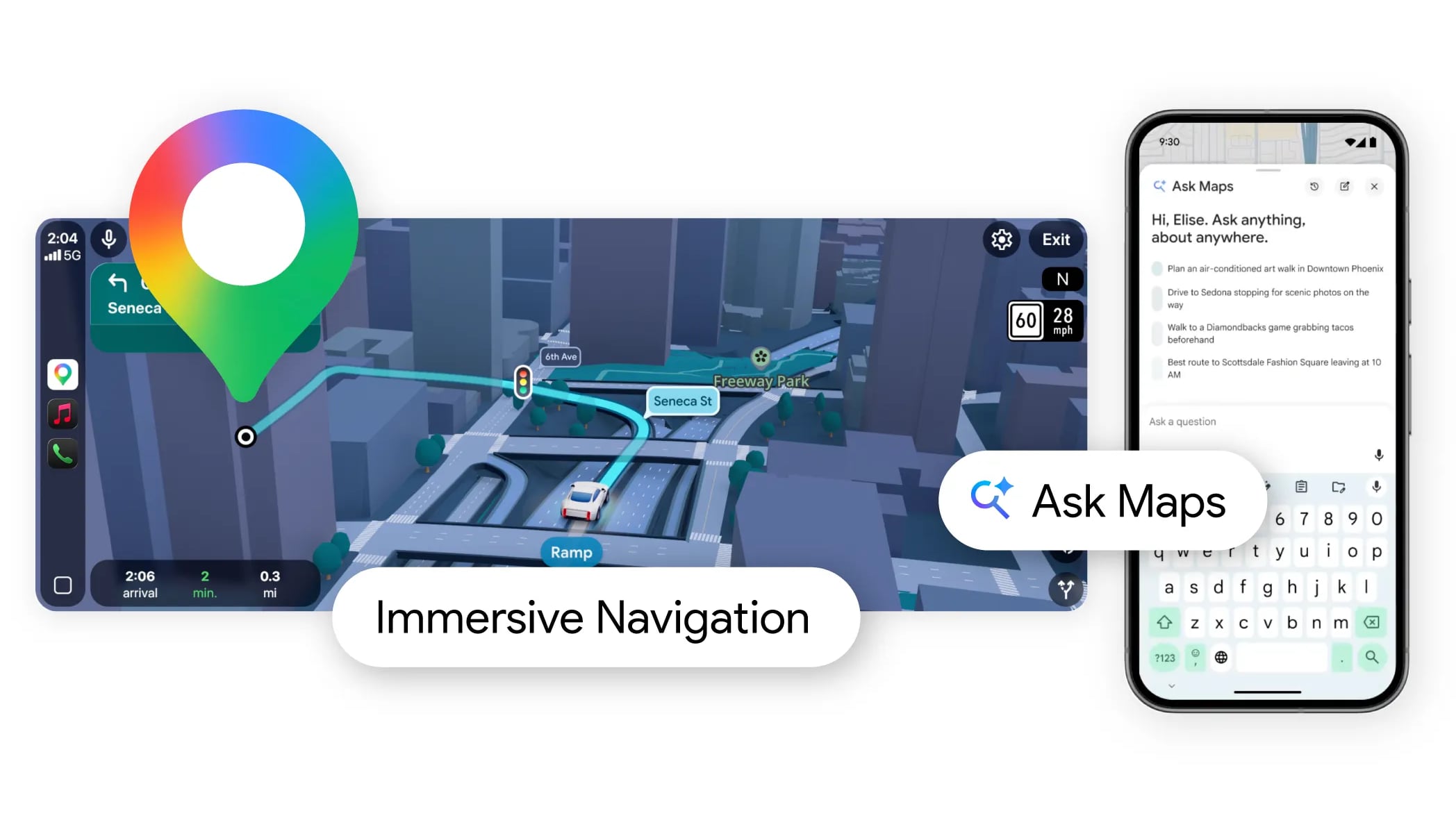

Google today added Gemini AI to Google Maps, enabling a new Ask Maps feature. Gemini in maps can answer complex, real-world questions that Google says "a map could never answer before."

|

|

Apple's new M5 MacBook Air and M5 Pro/M5 Max MacBook Pro just launched yesterday, and now Amazon has the first cash discounts on these models. You'll find $49 off nearly every new MacBook model on Amazon, without the need of a membership or clipping a coupon.

|

|

Flash floods are notoriously difficult to predict, but Google might have a novel solution. The company just revealed Groundsource, a prediction tool for flash floods that uses Gemini to source data from old news reports. This is the first time it has used a language model for this type of work.

Google tasked Gemini with sorting through 5 million news articles from around the world and isolating flood reports. It transformed this data into a geo-tagged series of chronological events. Next, researchers trained a model to ingest current weather forecasts and leverage the Groundsource data to determine the likelihood of a flash flood in a given area.

We don't yet have any concrete information about how accurate Google's forecast model is, though that should come over time. One trial user did say it helped his organization respond more quickly to localized weather events. For now, the company is highlighting risks for urban areas in 150 countries via its

|

|

Samsung delivers modest but meaningful upgrades to the Ultra's design, cameras and battery. And yes, the phone is packed with new AI features -- and most of them are actually pretty useful.

|

|

Amazon just unveiled a new personality type for Alexa. The "sassy" option is reserved for adults and the company claims it will throw out censored curse words from time to time. Amazon describes this option as a combination of "unfiltered personality" and "razor-sharp wit, playful sarcasm and occasional censored profanity."

We aren't yet sure how the chatbot handles the censoring. Does it use a garden-variety bleep or a replacement word like "fudge" or something? I managed to get it to say "damn" and "hell", but couldn't force anything more profane than that.

In any event, adult users have to jump through a couple of hoops to activate this mode. It won't work if there's an enabled Amazon Kids profile on the account and it requires additional security checks, like face scans. The company also warns people when they select the mode that the new tone could contain "mature subject matter." I'm more afraid of the bot using "clever comebacks" to absolutely shred my buying habits. Yes, I buy bagged popcorn when I have plenty of uncooked kernels in the pantry. I'm working on it.

This is still Alexa, despite the ability to drop colorful language every now and again. It's not an adult AI companion like the anime-inspired weirdness Grok recently trotted out or whatever erotica-infused nonsense OpenAI has been working on. Also, Amazon says the bot won't get involved with

|

|

Weekly MLB games are set to return to the Apple TV subscription service on Friday, March 27, Apple said today. The fifth Friday Night Baseball season will begin with the Los Angeles Angels facing off against the Houston Astros, followed by the Cleveland Guardians playing against the Seattle Mariners.

|

|

Apple's Mac lineup will soon span a wider price range than ever, from the new $599 MacBook Neo to a rumored top-of-the-line MacBook "Ultra" expected later this year. However, new research suggests the broader laptop market could be heading for a painful price adjustment.

|

|

With the Studio Display and Studio Display XDR set to launch on Wednesday, members of the media have started publishing their reviews of the new display options.

|

|