
In the summer of 2023, OpenAI created a "Superalignment" team whose goal was to steer and control future AI systems that could be so powerful they could lead to human extinction. Less than a year later, that team is dead.

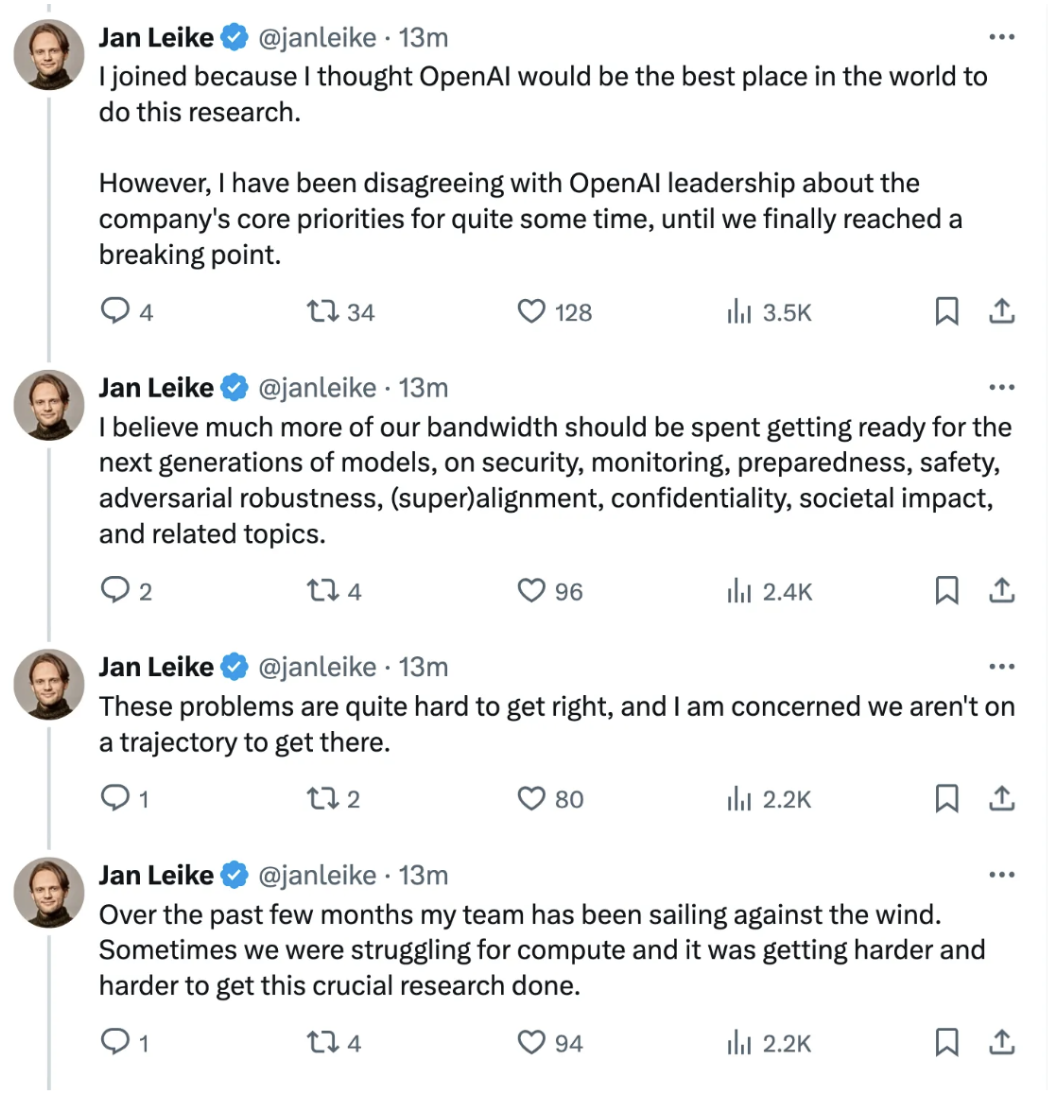

OpenAI told Bloomberg that the company was "integrating the group more deeply across its research efforts to help the company achieve its safety goals." But a series of tweets from Jan Leike, one of the team's leaders who recently quit, revealed internal tensions between the safety team and the larger company.

In a statement posted on X on Friday, Leike said that the Superalignment team had been fighting for resources to get research done. "Building smarter-than-human machines is an inherently dangerous endeavor," Leike wrote. "OpenAI is shouldering an enormous responsibility on behalf of all of humanity. But over the past years, safety culture and processes have taken a backseat to shiny products." OpenAI did not immediately respond to a request for comment from Engadget.


Leike's departure earlier this week came hours after OpenAI chief scientist Sutskever
